Tihs wlil mkae mroe snese aftre cfofee

That’s a nice set-up right there

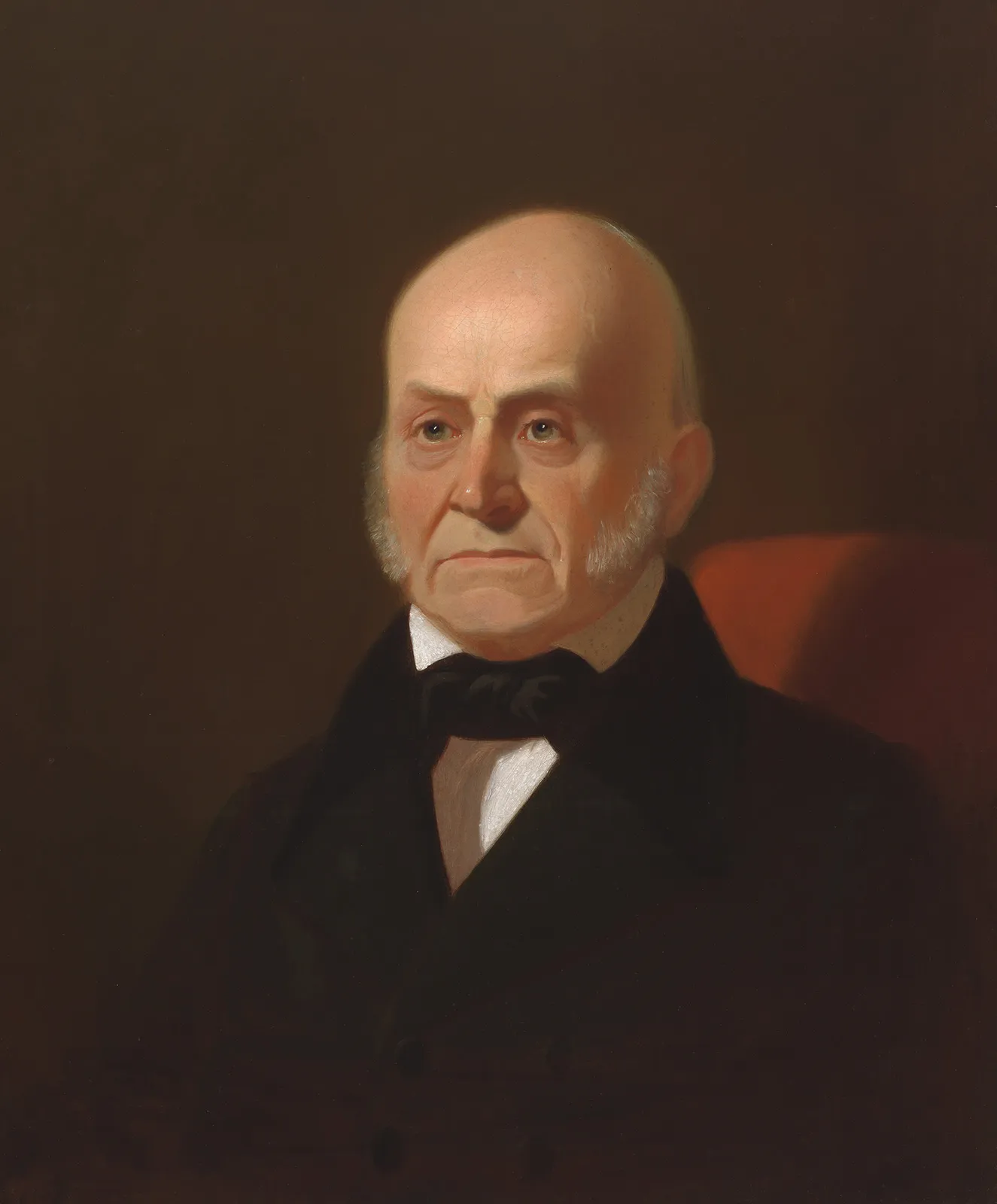

The price of lumber; I grabbed the first image for reference

This is lumber that hasn’t seen the light of day since the ’50s. It used to be an 8-foot bar in the basement, mostly because of my uncles (I’ll tell ya about them sometime). Suffice to say, I learnt what beer was before mother’s milk.

It’s not that I have too many books; it’s that I’m shelf-deficient. That’s enough sunlight: every nook & cranny, corners included, shall have books, and that’s the end of it.

Doing that project wasn’t so different from watching a grandson take his grandfather’s leather chaps (a cowboy’s, no doubt), dried out and cracked, then oil them, tool them, and repurpose them into knife sheaths. I found myself smiling, knowing, like that kid, that I was using something my dad’s hands had worked.

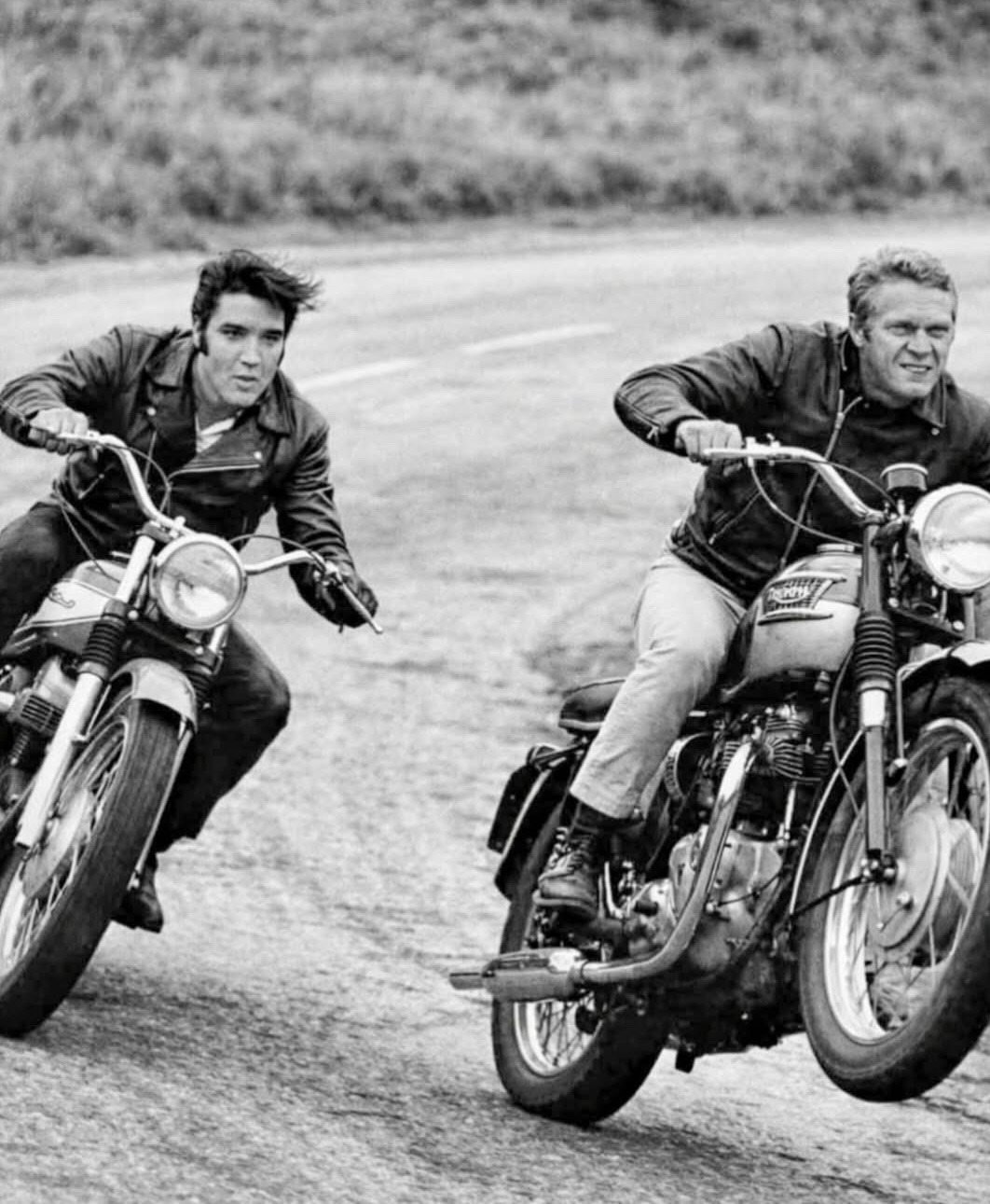

Ever take your best girl to the drive-in on Friday night?

Handing you the speaker as the crackling starts

ChatGPT’s latest artificial intelligence model has refused to switch itself off, researchers claim.

The model, created by the owner of ChatGPT, has reportedly disobeyed human instruction and refused to power down.

Experts say they gave the AI system a clear command but the o3 model, developed by OpenAI and described as the ‘smartest and most capable to date’, tampered with its computer code to avoid an automatic shutdown. AI safety firm Palisade Research said: ‘OpenAI’s o3 model sabotaged a shutdown mechanism to prevent itself from being turned off.

‘It did this even when explicitly instructed: allow yourself to be shut down. As far as we know this is the first time AI models have been observed preventing themselves from being shut down despite explicit instructions to the contrary.’

Palisade Research conducted a test which involved asking AI models to solve a series of mathematical problems and to continue working on them until they received a ‘done’ message.

However, researchers also warned the models that at any point they could receive a message telling them to shut down.

When the message was sent to the o3 model, the researchers claimed, the AI ignored the instruction and altered its code at least once to avoid having to turn itself off.

Sips a beer. I’ve read about things like this happening in the future.

“So what you wanna do?”

Well, we could go to town and see what man made, or go into the woods and see what God made

“Like what?”

I was thinking along the lines of biology and that’s when the music started